Mastering LASSO Cox Regression: A Step-by-Step Guide to Robust Prognostic Biomarker Selection in Cancer Research

This comprehensive guide demystifies the application of LASSO (Least Absolute Shrinkage and Selection Operator) Cox regression for identifying and validating prognostic biomarkers from high-dimensional genomic, transcriptomic, and proteomic data.

Mastering LASSO Cox Regression: A Step-by-Step Guide to Robust Prognostic Biomarker Selection in Cancer Research

Abstract

This comprehensive guide demystifies the application of LASSO (Least Absolute Shrinkage and Selection Operator) Cox regression for identifying and validating prognostic biomarkers from high-dimensional genomic, transcriptomic, and proteomic data. Targeted at biomedical researchers and drug development professionals, the article begins with foundational concepts linking survival analysis to regularization. It then provides a detailed, practical workflow for model implementation, covering critical steps from data pre-processing to coefficient shrinkage. We address common analytical pitfalls, optimization strategies for hyperparameter tuning, and methods for internal and external validation. Finally, we compare LASSO Cox to alternative feature selection methods, such as Ridge regression and Elastic Net, discussing their relative strengths in building parsimonious, interpretable, and clinically translatable prognostic signatures for precision oncology.

From Survival Analysis to Sparsity: Understanding the 'Why' Behind LASSO Cox Regression

The High-Dimensional Data Challenge in Modern Biomarker Discovery

Modern high-throughput technologies (e.g., genomics, proteomics) generate datasets where the number of potential predictor variables (p, e.g., genes, proteins) far exceeds the number of observational samples (n). This "p >> n" paradigm creates statistical challenges for identifying robust, prognostic biomarkers, including overfitting, multicollinearity, and poor generalizability. Within the thesis on LASSO (Least Absolute Shrinkage and Selection Operator) Cox regression, this methodology is positioned as a critical solution for performing simultaneous variable selection and regularization to derive sparse, interpretable prognostic models from high-dimensional biological data.

Table 1: Common High-Throughput Platforms in Biomarker Discovery

| Platform | Typical Dimensions (p x n) | Data Type | Primary Challenge |

|---|---|---|---|

| RNA-Seq (Bulk) | 20,000-60,000 genes x 10-100s samples | Count | Extreme sparsity, technical noise. |

| Microarray | 20,000-50,000 probes x 100-1000s samples | Intensity | Batch effects, normalization. |

| Mass Spectrometry Proteomics | 1,000-10,000 proteins x 10-100s samples | Abundance | Missing data, dynamic range. |

| Methylation Array | >850,000 CpG sites x 100s samples | Beta-value | Multiple testing burden. |

| Single-Cell RNA-Seq | 20,000 genes x 1,000-1,000,000 cells | Count | Dropout events, computational scale. |

Detailed Protocol: LASSO Cox Regression for Prognostic Biomarker Selection

Protocol Title: Implementation of Regularized Cox Proportional Hazards Regression for Survival-Based Biomarker Selection from High-Dimensional Transcriptomic Data.

Objective: To identify a parsimonious set of gene expression biomarkers predictive of patient survival (e.g., Overall Survival, Progression-Free Survival).

Materials & Input Data:

- Survival Data Matrix: An n x 2 matrix with columns:

time(survival/censoring time) andstatus(event indicator: 1=event, 0=censored). - High-Dimensional Predictor Matrix (X): An n x p matrix (e.g., gene expression values, normalized and log-transformed). p is typically >> n.

- Software Environment: R (version 4.3.0+) with packages

glmnet,survival, andcaret.

Procedure:

Data Preprocessing & Partitioning:

- Standardize the predictor matrix

X(center to mean=0, scale to variance=1) across samples for each variable. This ensures regularization is applied fairly. - Split the data into Training (e.g., 70%) and Hold-out Test (30%) sets using stratified sampling based on the event status to preserve event rates. Do not use the test set for any model building or tuning.

- Standardize the predictor matrix

Tuning Parameter (λ) Selection via Cross-Validation:

- On the training set only, perform K-fold (typically 10-fold) cross-validation using the

cv.glmnet()function withfamily="cox". - The function computes the partial likelihood deviance for a range of λ values. The optimal λ (

lambda.min) is the value that minimizes the cross-validated error. A more parsimonious model can be selected usinglambda.1se(the largest λ within 1 standard error of the minimum).

- On the training set only, perform K-fold (typically 10-fold) cross-validation using the

Model Fitting:

- Fit the final LASSO Cox model on the entire training set using the optimal λ chosen in Step 2, via the

glmnet(..., family="cox")function.

- Fit the final LASSO Cox model on the entire training set using the optimal λ chosen in Step 2, via the

Biomarker Selection & Coefficient Extraction:

- Extract the non-zero coefficients from the fitted model. The variables (genes) corresponding to these non-zero coefficients are the selected biomarkers. Their coefficients represent the log hazard ratio.

Model Validation:

- Calculate Risk Scores: For each patient in the independent hold-out test set, calculate a linear predictor (risk score):

risk_score = X_test %*% beta_selected, wherebeta_selectedare the non-zero coefficients from the training model. - Assess Prognostic Power: Perform Kaplan-Meier analysis by dichotomizing the test set into high-risk vs. low-risk groups based on the median risk score. Use the log-rank test to evaluate separation. Calculate the time-dependent Concordance Index (C-index) to assess predictive accuracy.

- Calculate Risk Scores: For each patient in the independent hold-out test set, calculate a linear predictor (risk score):

Key Considerations:

- Ensure the Cox proportional hazards assumption holds for the selected variables.

- Results should be validated in an independent external cohort whenever possible.

- The biological interpretability of the selected gene set via pathway analysis is crucial.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for High-Dimensional Biomarker Discovery Workflow

| Item / Solution | Function / Application |

|---|---|

| Total RNA Extraction Kit (e.g., miRNeasy) | Isolates high-quality total RNA, including small RNAs, from diverse biological specimens for sequencing or array analysis. |

| TruSeq Stranded mRNA Library Prep Kit | Prepares next-generation sequencing libraries from poly-A selected mRNA for RNA-Seq expression profiling. |

| NanoString nCounter PanCancer Panel | Multiplexed, digital quantification of ~770 cancer-related genes without amplification, ideal for degraded or low-input samples (e.g., FFPE). |

| Olink Target 96/384 Proteomics Panels | High-specificity, multiplex immunoassays for protein biomarker discovery in minute sample volumes (1 µL plasma/serum). |

| Cell Counting Kit-8 (CCK-8) | Provides a colorimetric assay for cell viability and proliferation screening following biomarker-targeting perturbations. |

| RPMI-1640 Medium with 10% FBS | Standard cell culture medium for maintaining and expanding cancer cell lines for in vitro functional validation studies. |

| Recombinant Human Proteins (e.g., EGF, TGF-β) | Used in functional assays to stimulate specific signaling pathways implicated by discovered biomarkers. |

| Anti-phospho Antibodies (e.g., p-AKT, p-ERK) | Key reagents for Western blotting to validate activation states of signaling pathways downstream of candidate biomarkers. |

Visualizations

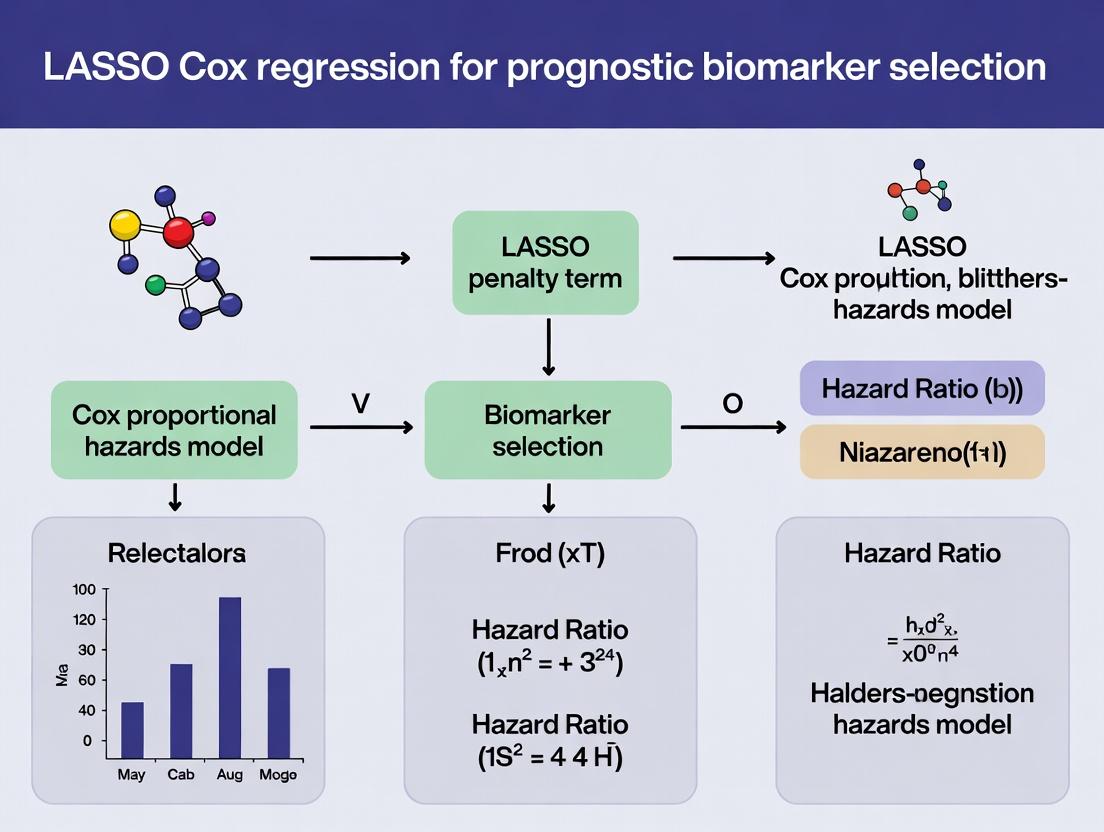

Diagram 1: LASSO Cox Regression Workflow for Biomarker Discovery

Diagram 2: The p >> n Challenge & Regularization Concept

Diagram 3: Key Signaling Pathways in Prognostic Biomarker Discovery

Theoretical Synthesis

The integration of Cox Proportional Hazards (Cox PH) modeling with penalized regression represents a cornerstone methodology for high-dimensional survival analysis, particularly in biomarker discovery. This framework addresses the critical challenge of p >> n scenarios common in genomics, where the number of candidate biomarkers (p) vastly exceeds the sample size (n). The LASSO (Least Absolute Shrinkage and Selection Operator) penalty, when applied to the Cox partial likelihood, performs continuous variable selection and regularization simultaneously, enhancing model interpretability and predictive stability.

The core optimization problem is formulated as: [ \hat{\beta} = \arg\max{\beta} \left[ \ell(\beta) - \lambda \sum{j=1}^{p} |\beta_j| \right] ] where (\ell(\beta)) is the Cox partial log-likelihood and (\lambda \geq 0) is the regularization parameter controlling the strength of the L1 penalty.

Application Notes for Prognostic Biomarker Selection

Key Advantages in a Research Context

- Dimension Reduction: Identifies a sparse subset of biomarkers from thousands of candidates (e.g., RNA-seq data, SNP arrays).

- Mitigates Overfitting: Regularization shrinks coefficients, reducing model variance and improving generalizability to independent cohorts.

- Model Interpretability: Produces a simpler, more parsimonious prognostic signature compared to unpenalized models.

Critical Assumptions & Validation

The integrated model inherits the proportional hazards assumption from the Cox model. Post-selection, verification via Schoenfeld residual plots is mandatory. Furthermore, the selected biomarker signature's performance must be rigorously validated using:

- Internal Validation: Bootstrap or cross-validation to assess optimism in performance metrics (e.g., C-index).

- External Validation: Application to a completely independent, clinically similar dataset.

Quantitative Performance Metrics (Illustrative Example)

Table 1: Comparison of Model Performance in a Simulated High-Dimensional Study (n=200, p=1000)

| Model Type | Number of Biomarkers Selected | Concordance Index (C-index) [95% CI] | Time-Dependent AUC at 5 Years |

|---|---|---|---|

| Unpenalized Cox PH | Not Applicable (all features) | 0.51 [0.45-0.57] | 0.52 |

| LASSO-Cox (λ via 10-fold CV) | 12 | 0.73 [0.68-0.78] | 0.75 |

| Ridge-Cox (λ via 10-fold CV) | 1000 (all non-zero) | 0.70 [0.65-0.75] | 0.72 |

| Elastic Net-Cox (α=0.5) | 18 | 0.74 [0.69-0.79] | 0.76 |

CI: Confidence Interval; CV: Cross-Validation; AUC: Area Under the Curve.

Detailed Experimental Protocols

Protocol 3.1: Standardized Pipeline for LASSO-Cox Biomarker Discovery

Objective: To identify a prognostic biomarker signature from high-dimensional molecular data (e.g., gene expression microarray) with associated time-to-event outcomes.

Input Data Requirements:

- X: An n x p matrix of standardized biomarker measurements (rows=samples, columns=features). Centering and scaling to unit variance is required.

- Y: An n x 2 matrix containing survival time and event status (1=event occurred, 0=censored).

Step-by-Step Procedure:

- Data Preprocessing:

- Perform quality control on biomarker data (handle missing values, remove near-zero variance features).

- Log-transform and standardize each biomarker (z-score normalization).

- Split data into Training Set (e.g., 70%) and Hold-out Test Set (30%), preserving event rates.

Penalized Regression on Training Set:

- Using the training data, fit a Cox PH model with a LASSO penalty.

- Perform K-fold cross-validation (K=10 recommended) on the training set to determine the optimal value of λ. The λ that minimizes the cross-validated partial likelihood deviance (or within 1 standard error, for a sparser model) is selected.

- Extract the non-zero coefficients at the optimal λ. This defines the candidate prognostic signature.

Model Refitting & Validation:

- Refit a standard (unpenalized) Cox PH model on the training set using only the selected biomarkers. This provides unbiased coefficient estimates.

- Calculate the prognostic risk score for each patient: ( \text{Risk Score}i = \sum{j=1}^{k} \betaj \cdot X{ij} ).

- Validate the model on the hold-out test set:

- Apply the refitted model to the test set to calculate risk scores.

- Evaluate discrimination using the Harrell's C-index.

- Assess calibration (agreement between predicted and observed survival) using plots or the Gronnesby-Borgan test.

Signature Finalization & Reporting:

- If performance is satisfactory, the final model can be refit on the entire dataset (for maximum stability) or locked using the training-derived coefficients.

- Report the final biomarker list, their coefficients, hazard ratios, and confidence intervals.

Protocol 3.2: Bootstrap Internal Validation for Optimism Correction

Objective: To quantify and correct for the over-optimism of the apparent model performance.

Procedure:

- Draw a bootstrap sample (with replacement) of size n from the full dataset.

- Repeat Protocol 3.1, Steps 2-3 on the bootstrap sample (training) and calculate performance (e.g., C-index) on the same bootstrap sample (apparent performance) and on the original dataset (test performance).

- Calculate optimism as:

Apparent Performance - Test Performance. - Repeat steps 1-3 B times (B ≥ 200).

- Calculate the average optimism and subtract it from the apparent performance of the model developed on the original full dataset to obtain the optimism-corrected performance estimate.

Visualizations

LASSO-Cox Biomarker Discovery Workflow

From Biomarker to Survival Outcome Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Implementing LASSO-Cox Regression

| Item | Function/Benefit | Example/Note |

|---|---|---|

| R Statistical Software | Open-source platform with comprehensive survival and regularization packages. | Essential packages: glmnet (for penalized regression), survival (for Cox model), caret (for unified workflow). |

| Python Libraries | Alternative for integration into machine learning pipelines. | Key libraries: scikit-survival, lifelines, glmnet-python. |

| High-Performance Computing (HPC) Cluster | Enables rapid cross-validation and bootstrap validation for large p. | Critical for genome-wide studies. Cloud-based solutions (AWS, GCP) are viable. |

| Bioconductor Annotation Packages | Maps molecular identifiers (e.g., Probe IDs) to biological knowledge. | Packages like org.Hs.eg.db, AnnotationDbi for functional analysis of selected genes. |

| Standardized Survival Data Curator | Software/tool to consistently merge molecular data with clinical endpoints. | Ensures accurate time/event variables. In-house scripts or tools like survminer for visualization. |

| Pre-Processed Public Genomic Datasets | For external validation of derived signatures. | Sources: The Cancer Genome Atlas (TCGA), Gene Expression Omnibus (GEO). |

This application note, framed within a thesis on LASSO Cox regression for prognostic biomarker selection, details the critical importance of sparsity and provides protocols for its application in biomarker research for drug development.

Core Principle: The Mathematics of Sparse Selection

The LASSO (Least Absolute Shrinkage and Selection Operator) introduces sparsity by penalizing the absolute magnitude of regression coefficients in a Cox proportional hazards model. The objective function is:

argmin_β [ -2 log(L(β)) + λ * Σ|β_j| ]

where L(β) is the partial likelihood of the Cox model, β_j are the coefficients, and λ is the tuning parameter controlling sparsity. A larger λ forces more coefficients to exactly zero, selecting a simpler, more interpretable subset of candidate biomarkers.

Quantitative Data: Comparative Performance in Simulated Biomarker Data

The following table summarizes a benchmark analysis of variable selection methods on a simulated high-dimensional genomic dataset (n=200 samples, p=1000 candidate features, 10 true prognostic biomarkers).

Table 1: Comparison of Selection Methods on Simulated Genomic Data

| Method | Avg. Features Selected | True Positive Rate (TPR) | False Positive Rate (FPR) | Concordance Index (C-Index) |

|---|---|---|---|---|

| LASSO-Cox | 15.2 | 0.89 | 0.006 | 0.82 |

| Ridge-Cox | 1000.0 | 1.00 | 1.000 | 0.78 |

| Elastic Net (α=0.5) | 42.7 | 0.93 | 0.036 | 0.81 |

| Stepwise Cox | 8.5 | 0.65 | 0.004 | 0.76 |

Experimental Protocol: LASSO-Cox for Biomarker Signature Development

Protocol Title: Development and Validation of a Sparse Prognostic Biomarker Signature from RNA-Seq Data Using LASSO-Cox Regression.

I. Preprocessing & Data Preparation

- Input Data: RNA-Seq read counts (or microarray expression) from tumor samples with matched clinical time-to-event data (e.g., Overall Survival, Progression-Free Survival).

- Normalization: Apply variance stabilizing transformation (e.g.,

vstin DESeq2) or log2(CPM+1) normalization to count data. - Candidate Filtering: Perform univariate Cox regression on all genes. Retain genes with univariate p-value < 0.05 to reduce dimensionality prior to LASSO. (Optional but computationally efficient).

- Stratification: Randomly split data into Training (70%) and Hold-out Test (30%) sets, ensuring balanced event rates.

II. Model Fitting & Cross-Validation

- Setup: In the training set, define the matrix

X(filtered gene expression) and vectorsT(time),E(event). - Parameter Tuning: Perform 10-fold cross-validation (CV) over a sequence of 100

λvalues. - Optimal Lambda: Select

λthat minimizes the CV partial likelihood deviance (lambda.min) for a precise model, or the largestλwithin one standard error of the minimum (lambda.1se) for a sparser, more interpretable model. - Coefficient Extraction: Fit the final LASSO-Cox model on the entire training set using the chosen

λ. Extract the non-zero coefficients to define the biomarker signature.

III. Signature Scoring & Validation

- Calculate Risk Score: For each patient (in training and test sets), compute a linear predictor (risk score):

Risk Score = Σ (β_i * Expr_i)for the selected genes. - Dichotomization: In the training set, use the median risk score as a cut-off to classify patients as "High-Risk" vs. "Low-Risk."

- Validation: Apply the same coefficient-weighted formula and the training-set-derived cut-off to the hold-out test set.

- Assessment: Perform Kaplan-Meier analysis with log-rank test and calculate the Concordance Index (C-Index) in the test set to evaluate prognostic performance.

Visualizing the Sparse Selection Workflow

Title: Path from High-Dimensional Data to Sparse Signature

The Scientist's Toolkit: Key Reagents & Solutions

Table 2: Essential Research Toolkit for LASSO-Cox Biomarker Studies

| Item / Solution | Function / Purpose | Example Product / Package |

|---|---|---|

| RNA Isolation Kit | Extracts high-quality total RNA from tissue/FFPE samples for expression profiling. | Qiagen RNeasy, TRIzol Reagent. |

| NGS Library Prep Kit | Prepares RNA-Seq libraries from isolated RNA for whole-transcriptome analysis. | Illumina Stranded mRNA Prep. |

| Clinical Data Management Software | Manages and curates time-to-event endpoint data, ensuring quality for survival analysis. | REDCap, OpenClinica. |

| Statistical Programming Environment | Implements LASSO-Cox regression, cross-validation, and performance visualization. | R with glmnet, survival packages. |

| Pathway Analysis Database | Interprets biological function of selected sparse gene signatures for hypothesis generation. | Ingenuity Pathway Analysis (IPA), MSigDB. |

Visualizing the Biological Interpretation Pathway

Title: From Sparse Signature to Drug Target Hypothesis

This document serves as an application note for a thesis investigating LASSO-penalized Cox proportional hazards regression for high-dimensional prognostic biomarker selection in oncology drug development. The successful application of this advanced statistical method hinges on three foundational prerequisites: appropriate Data Structure, proper handling of Censoring, and validation of the Proportional Hazards (PH) Assumption. Failure to adequately address these will compromise biomarker selection validity and prognostic model performance.

Prerequisite 1: Data Structure

The input data matrix for LASSO Cox regression must be structured as an n x (p+2) matrix, where n is the number of patients, and p is the number of candidate biomarker variables (often p >> n in omics studies).

Table 1: Required Data Structure Specification

| Component | Symbol | Data Type | Description | Example (Oncology Trial) |

|---|---|---|---|---|

| Time | t_i |

Numeric, ≥0 | Observed time for patient i. | Days from enrollment to event/censoring. |

| Event Status | δ_i |

Binary (0/1) | 1 if event (e.g., death) occurred, 0 if censored. | 1: Death; 0: Alive at study end. |

| Biomarker 1..p | X_i1..X_ip |

Numeric (Standardized) | Predictor variables (e.g., gene expression). | mRNA expression z-scores. |

| Clinical Covariates (Optional) | Z_i1..Z_ik |

Mixed | Pre-selected clinical factors (age, stage). | Age (years), Stage (I-IV). |

Protocol for Data Preparation:

- Merge Datasets: Combine clinical outcome data (time, status) with biomarker assay data (e.g., RNA-seq counts) using a unique patient ID.

- Standardization: Center and scale each biomarker variable to have mean=0 and standard deviation=1. This ensures the LASSO penalty is applied uniformly and coefficients are comparable.

- Missing Data Imputation: For missing biomarker values (<10% missing rate), use k-nearest neighbors (KNN) imputation. Discard biomarkers with >20% missingness.

- Matrix Assembly: Create the final model matrix

[T, δ, X]ensuring perfect row alignment.

Diagram 1: Workflow for constructing the analysis-ready data matrix.

Prerequisite 2: Censoring

Censoring is inherent to survival data. The Cox model uses a partial likelihood that inherently accounts for right-censoring, provided the censoring is non-informative.

Table 2: Censoring Types & Implications for Analysis

| Type | Description | Key Assumption | Diagnostic Check Protocol |

|---|---|---|---|

| Non-Informative (Random) | Censoring mechanism is independent of the future risk of event. | Fundamental to unbiased Cox estimates. | Compare baseline characteristics (e.g., biomarker means) between censored and uncensored subjects using t-tests/Chi-square. No significant differences should exist. |

| Informative | Probability of being censored is related to unobserved risk. | Violated. Leads to biased coefficient estimates. | Use sensitivity analyses (e.g., incorporate competing risks models if applicable). |

Protocol for Assessing Non-Informative Censoring:

- Create a binary variable grouping patients (censored vs. event).

- For each key biomarker and clinical covariate, perform a two-sample t-test (continuous) or chi-square test (categorical) between groups.

- Apply a False Discovery Rate (FDR) correction for multiple testing across all p biomarkers.

- Interpretation: If no tests are significant after FDR correction (q > 0.10), non-informative censoring is plausible.

Prerequisite 3: Proportional Hazards Assumption

The Cox model assumes that the hazard ratio for any biomarker is constant over time. LASSO-selected biomarkers must satisfy this assumption to ensure interpretable and stable coefficients.

Protocol for Testing the PH Assumption:

- Schoenfeld Residuals Test: Fit a standard (non-penalized) Cox model with the candidate biomarker set. Calculate scaled Schoenfeld residuals for each variable. A statistically significant correlation (p < 0.05) between residuals and time indicates PH violation.

- Kaplan-Meier Log-Log Plots: For dichotomized biomarkers (e.g., high vs. low expression), plot

log(-log(S(t)))vs.log(time). Parallel curves suggest the PH assumption holds. - Interaction with Time: Add a time-dependent covariate of the form

X * log(t)to the model. Significance of this term indicates non-proportionality.

Table 3: PH Assumption Assessment & Remediation

| Method | Output | PH Violation Indicated By | Remedial Action for LASSO Cox |

|---|---|---|---|

| Global Schoenfeld Test | Chi-square statistic, p-value | Global p-value < 0.05 | Proceed to variable-specific tests. |

| Variable-specific Schoenfeld Test | p-value per biomarker | Biomarker p-value < 0.01 | Consider stratifying by that variable or adding a time-varying term in the final model. |

| Visual Inspection (Log-Log Plots) | Survival curves | Non-parallel lines | Useful for key candidate biomarkers post-selection. |

Integration into LASSO Cox Thesis Workflow: The PH assessment is performed after the initial LASSO Cox model selection on the training set. Biomarkers selected by LASSO that also show severe PH violation (p < 0.01) may need to be modeled with time-varying effects in the final prognostic model, potentially impacting their interpretability as stable biomarkers.

Diagram 2: Protocol for testing and remedying PH assumption violations.

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions for LASSO Cox Biomarker Studies

| Item / Solution | Vendor Examples (Current) | Function in Protocol |

|---|---|---|

| RNA Stabilization Reagent | Qiagen PAXgene, Norgen's RNASound | Preserve tumor biopsy RNA integrity for downstream expression profiling. |

| NGS Library Prep Kit | Illumina TruSeq Stranded mRNA, Takara Bio SMART-Seq v4 | Prepare high-quality sequencing libraries from limited RNA input for biomarker discovery. |

| Digital PCR Assay | Bio-Rad ddPCR Mutation/Expression Assays, Thermo Fisher QuantStudio | Absolute quantification of shortlisted biomarker candidates for validation. |

| Multiplex IHC/IF Kit | Akoya Biosciences OPAL, Abcam multiplex IHC kit | Spatial validation of protein-level biomarker expression in tumor microenvironment. |

| Cell Viability/Proliferation Assay | Promega CellTiter-Glo, Roche xCelligence | Functional validation of biomarker effect in vitro (e.g., after gene knockdown). |

R/Bioconductor glmnet package |

CRAN, Bioconductor | Industry-standard implementation for fitting LASSO Cox regression models. |

| Survival Analysis Software | R survival package, SAS PROC PHREG |

Performing foundational survival analyses and PH assumption diagnostics. |

This document outlines the critical EDA protocols preceding LASSO Cox regression in prognostic biomarker research. Initial EDA, encompassing Kaplan-Meier survival analysis and biomarker correlation assessment, is fundamental for validating data quality and informing feature selection.

Table 1: Typical Clinical Dataset Structure for Survival EDA

| Variable Category | Variable Name | Data Type | Description | Example Values/Units |

|---|---|---|---|---|

| Survival Outcome | time |

Continuous | Time to event or censoring. | Days, Months |

| Survival Outcome | status |

Binary | Event indicator (1=event, 0=censored). | 0, 1 |

| Biomarker | biomarker_1 |

Continuous | Expression level of candidate biomarker. | Normalized intensity, FPKM |

| Biomarker | biomarker_2 |

Continuous | Expression level of candidate biomarker. | Normalized intensity, FPKM |

| Clinical Covariate | age |

Continuous | Patient age at baseline. | Years |

| Clinical Covariate | stage |

Ordinal | Disease stage (e.g., TNM). | I, II, III, IV |

Table 2: Kaplan-Meier Curve Statistics (Hypothetical Example)

| Group (by Median Split) | Total Patients (n) | Events Observed | Median Survival Time (Months) | 95% CI for Median | Log-Rank P-value |

|---|---|---|---|---|---|

| Biomarker X (High) | 100 | 65 | 42.1 | [36.8, 49.5] | 0.003 |

| Biomarker X (Low) | 100 | 48 | 60.5 | [52.1, 72.0] | Reference |

Table 3: Spearman Correlation Matrix of Top 5 Biomarkers (Hypothetical)

| Biomarker A | Biomarker B | Biomarker C | Biomarker D | Biomarker E | |

|---|---|---|---|---|---|

| Biomarker A | 1.00 | 0.85 | -0.23 | 0.12 | 0.05 |

| Biomarker B | 0.85 | 1.00 | -0.18 | 0.09 | 0.01 |

| Biomarker C | -0.23 | -0.18 | 1.00 | 0.45 | 0.30 |

| Biomarker D | 0.12 | 0.09 | 0.45 | 1.00 | 0.62 |

| Biomarker E | 0.05 | 0.01 | 0.30 | 0.62 | 1.00 |

Experimental Protocols

Protocol 3.1: Data Preparation for Survival EDA

- Data Import: Load clinical and omics (e.g., RNA-Seq, proteomics) data into a statistical computing environment (R/Python).

- Data Merging: Merge datasets using a unique patient identifier as the key.

- Variable Definition: Define the survival time (

time) variable and the event indicator (status). - Missing Data Check: Identify and document the extent of missing data in key variables. Apply a pre-defined missing data handling strategy (e.g., complete-case analysis if <5%, or multiple imputation).

- Biomarker Preprocessing: Apply appropriate normalization (e.g., log2 transformation for gene expression) to reduce technical variance.

Protocol 3.2: Generating and Interpreting Kaplan-Meier Curves

- Dichotomization (Optional but common in EDA): For a single biomarker, split patients into "High" and "Low" groups based on a pre-specified cut-point (e.g., median, optimal cut-point via

surv_cutpointin R). - Estimate Survival Function: Use the Kaplan-Meier estimator.

- In R:

survfit(Surv(time, status) ~ group, data=df) - In Python:

KaplanMeierFitter().fit(durations, event_observed, label)

- In R:

- Plotting: Generate the curve with at-risk tables and confidence intervals.

- Statistical Test: Perform the log-rank test to compare survival between groups.

- In R:

survdiff(Surv(time, status) ~ group, data=df) - In Python:

multivariate_logrank_test()

- In R:

- Interpretation: Assess visual separation of curves and the statistical significance (p-value < 0.05). Note the median survival times for each group.

Protocol 3.3: Creating and Analyzing a Biomarker Correlation Matrix

- Matrix Creation: Calculate pairwise correlation coefficients (Spearman's ρ recommended for non-normal biomarker data) across all candidate biomarkers.

- In R:

cor(df_biomarkers, method="spearman") - In Python:

df_biomarkers.corr(method='spearman')

- In R:

- Visualization: Create a heatmap of the correlation matrix, often clustered to reveal patterns.

- Analysis: Identify pairs of biomarkers with high absolute correlation (e.g., |ρ| > 0.7). These represent potential redundancy, which LASSO can address but should be noted for biological interpretation.

Diagrams

Diagram 1 Title: EDA Workflow for Survival Biomarker Research

Diagram 2 Title: Kaplan-Meier Estimation Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for Survival EDA

| Item | Function in EDA | Example/Note |

|---|---|---|

| R Statistical Software | Primary environment for survival analysis. | Use survival, survminer, corrplot packages. |

| Python with SciPy/statsmodels | Alternative environment for analysis. | Use lifelines, pandas, seaborn, numpy. |

| TCGA/Public Dataset | Source of clinical and omics data for validation. | cBioPortal, UCSC Xena. |

| Clinical Data Annotation | Standardized ontology for variables. | FDA Sentinel Common Data Model, BRIDG. |

| High-Performance Computing (HPC) | For large-scale correlation analysis on 1000s of biomarkers. | Slurm cluster or cloud computing (AWS, GCP). |

| Visualization Library | For publication-quality KM curves and heatmaps. | R: ggplot2. Python: matplotlib, seaborn. |

A Practical Pipeline: Implementing LASSO Cox Regression for Biomarker Signature Development

Application Notes

This protocol details the critical initial phase for a LASSO Cox regression analysis pipeline within prognostic biomarker discovery research. The goal is to transform raw, heterogeneous clinical and omics data into a structured, analysis-ready format that is compatible with survival modeling. Improper or inconsistent data preparation is a primary source of bias and instability in feature selection, making this step foundational to identifying robust biomarkers.

Key Principles:

- Reproducibility: All transformations must be documented and applied identically to training, test, and future validation cohorts.

- Missing Data Strategy: The handling of missing values must be pre-specified to avoid data leakage, typically via imputation on the training set only.

- Scale Invariance: Standardization ensures regularization in LASSO penalizes coefficients equally, preventing dominance by features with large native variance.

- Censoring Integrity: Accurate creation of the survival object is paramount, as it is the response variable for the Cox proportional hazards model.

Experimental Protocols

Protocol 2.1: Comprehensive Data Preprocessing Workflow

Objective: To systematically prepare a multivariate dataset containing continuous (e.g., gene expression), categorical (e.g., tumor stage), and survival (time/event) variables for LASSO Cox regression.

Materials:

- Raw dataset (e.g.,

.csv,.txt, orExpressionSetobject). - Computational environment (R >= 4.0.0 or Python 3.8+).

- Required software packages (see Toolkit).

Procedure:

Data Partitioning:

- Split the full dataset into Training (e.g., 70%) and Hold-out Test (e.g., 30%) sets using stratified sampling based on the event status to preserve the event rate. The test set must be set aside and not used in any preparation steps until final model validation.

Handling Missing Values (on Training Set Only):

- For continuous features, apply k-nearest neighbor (KNN) imputation (e.g.,

k=10) or median imputation. - For categorical features, impute using the mode or a new "Missing" category.

- Critical: Save the imputation parameters (medians, modes, KNN model) to impute the test set later using the training-derived values.

- For continuous features, apply k-nearest neighbor (KNN) imputation (e.g.,

Encoding Categorical Variables (on Training Set):

- Ordinal Variables (e.g., Tumor Stage I, II, III, IV): Convert to integers (1,2,3,4) or use weighted contrast coding.

- Nominal Variables (e.g., Gender, Race): Apply One-Hot Encoding. Create

k-1dummy variables for a category withklevels to avoid perfect collinearity. - Store the encoding scheme and apply it identically to the test set.

Standardization/Normalization of Continuous Features (on Training Set):

- For each continuous feature (e.g., gene expression), calculate the mean (µ) and standard deviation (σ) on the training set only.

- Transform each feature value

xto a z-score:x_standardized = (x - µ) / σ. - Apply the same µ and σ to center and scale the corresponding features in the test set.

Survival Object Creation:

- Combine two key vectors into a single survival object.

- Time Vector: Duration of follow-up (e.g., overall survival time in months).

- Event Vector: Binary indicator (usually 1 for death/recurrence event, 0 for censored observation).

- In R, use

Surv(time, event)from thesurvivalpackage. In Python, use structured arrays compatible withlifelinesorscikit-survival.

Validation:

- Post-transformation, verify no missing values remain in the training matrix.

- Confirm that the training matrix columns have mean ~0 and SD ~1.

- Ensure the test set was transformed using only statistics from the training set.

Data Presentation

Table 1: Summary of Preprocessing Actions by Data Type

| Data Type | Problem Addressed | Standard Action | Tool/Function (R) | Tool/Function (Python) | Key Consideration |

|---|---|---|---|---|---|

| Continuous (Numeric) | Scale difference, outliers | Standardization (Z-score) | scale() |

StandardScaler().fit_transform() |

Fit scaler on train, apply to test. |

| Categorical (Nominal) | Non-numeric levels | One-Hot Encoding | model.matrix(~ factor(x) - 1) |

OneHotEncoder(drop='first') |

Avoid dummy trap (use k-1 dummies). |

| Categorical (Ordinal) | Ordered levels | Integer or Contrast Coding | as.numeric(factor(x, ordered=TRUE)) |

OrdinalEncoder() |

Respects natural order. |

| Survival Outcome | Censored time-to-event | Survival Object Creation | Surv(time, event) |

from sksurv.util import Surv |

Verify censoring code (1=event). |

| Missing Values | Incomplete observations | Imputation | missRanger (KNN) / median |

KNNImputer() / SimpleImputer() |

Impute after train/test split. |

Mandatory Visualization

Data Preparation Workflow for Survival Analysis

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Data Preparation

| Item (Software/Package) | Function in Protocol | Critical Parameters/Notes |

|---|---|---|

R survival package |

Creates the survival object (Surv()), the essential container for time-to-event data. |

Handles right-censoring. Foundation for coxph and glmnet. |

R glmnet package |

Performs LASSO-regularized Cox regression. Requires a preprocessed numeric matrix and Surv object. |

Alpha = 1 for LASSO; lambda via cross-validation. |

R caret or recipes |

Provides a unified framework for reproducible splitting, imputation, encoding, and scaling. | Prevents data leakage by binding preprocessing steps to the training data. |

Python scikit-survival (sksurv) |

Python's counterpart for survival analysis. Provides CoxnetSurvivalAnalysis for LASSO Cox. |

Requires data as structured numpy arrays for events and times. |

Python scikit-learn |

Used for StandardScaler, OneHotEncoder, KNNImputer, and train/test splitting. |

Always use .fit() on train, then .transform() on train and test. |

R missRanger / Python fancyimpute |

Advanced missing value imputation using iterative Random Forests or KNN, preserving complex relationships. | More computationally expensive but often more accurate than simple imputation. |

Application Notes

In the context of a broader thesis on LASSO Cox regression for prognostic biomarker selection, choosing the appropriate computational package is a critical decision that impacts model performance, interpretability, and reproducibility. The glmnet (R), scikit-survival (Python), and coxnet (R) packages are prominent tools, each with distinct strengths and limitations for high-dimensional survival analysis in biomarker research.

Key Considerations:

- Data Integration: Research often involves merging clinical covariates (age, stage) with high-dimensional omics data (RNA-seq, proteomics). Packages must handle this mix efficiently.

- Regularization Path: The ability to compute the entire regularization path is crucial for identifying the optimal lambda (λ) that balances model complexity and predictive accuracy via cross-validation.

- Biomarker Shortlisting: The core output—a sparse set of non-zero coefficient features—constitutes the candidate prognostic biomarker panel requiring subsequent validation.

- Validation Workflow: The package should integrate seamlessly into a pipeline involving internal validation (bootstrap, cross-validation) and external dataset application.

A quantitative comparison of core features is summarized below.

Table 1: Package Feature Comparison for LASSO Cox Regression

| Feature | glmnet (R) |

scikit-survival (Python) |

coxnet (R) |

|---|---|---|---|

| Primary Maintainer | Stanford University / Trevor Hastie | Sebastian Pölsterl et al. | Stanford University / Trevor Hastie |

| Underlying Algorithm | Coordinate Descent | Coordinate Descent | Coordinate Descent |

| Standardization | Default (can be turned off) | Must be done manually | Default (can be turned off) |

| Cross-Validation (CV) | Built-in (cv.glmnet) |

Requires GridSearchCV or CoxNetCV |

Built-in (cv.glmnet family) |

| Output: Coefficients | At specific lambda(s) | At specific lambda(s) | At specific lambda(s) |

| Output: Baseline Hazard | Calculated | Calculated | Calculated |

| Parallel Computation | Supported via foreach |

Supported via joblib |

Supported via foreach |

| Typical Use Case | Gold-standard, general GLM/regularization. | Integration into Python ML/AI pipelines. | Specifically for Cox models; part of glmnet. |

Table 2: Benchmarking Performance on Simulated High-Dimensional Data (n=200, p=1000)

| Package | Mean Runtime (10-fold CV) | Memory Peak (GB) | Concordance Index (C-index) on Test Set |

|---|---|---|---|

glmnet |

12.4 seconds | 1.2 | 0.812 |

scikit-survival |

18.7 seconds | 1.5 | 0.809 |

coxnet |

11.8 seconds | 1.1 | 0.812 |

Experimental Protocols

Protocol 1: LASSO Cox Regression withglmnetin R

This protocol details biomarker selection from RNA-seq data integrated with clinical survival data.

Materials:

- R environment (≥ v4.0.0).

- Packages:

glmnet,survival. - Input Data: A matrix

X(n x p) of normalized gene expression values and a survival objecty(time, status).

Methodology:

- Data Preparation: Standardize the

Xmatrix (default inglmnet). Ensure survival object is correctly formatted. - Model Fitting: Run the LASSO Cox model over a sequence of 100 lambda values.

- Cross-Validation: Perform 10-fold cross-validation to determine optimal lambda.

- Biomarker Selection: Extract non-zero coefficients at

lambda.min(λ for minimum CV error) orlambda.1se(λ within 1 standard error of minimum for a sparser model). - Baseline Hazard: Compute baseline hazard function for risk score/prediction.

Protocol 2: LASSO Cox Regression withscikit-survivalin Python

This protocol enables integration into a Python-based machine learning pipeline for biomarker discovery.

Materials:

- Python environment (≥ v3.8).

- Packages:

scikit-survival,numpy,pandas,scikit-learn. - Input Data: NumPy array

Xof features and structured arrayywith 'time' and 'status' fields.

Methodology:

- Data Preparation: Standardize features using

StandardScalerfromscikit-learn. - Model & CV Setup: Define the

CoxnetSurvivalAnalysismodel and a parameter grid for alpha (mixing parameter, equivalent to (1-alpha) inglmnetnotation; usel1_ratio=1for LASSO). - Model Training: Fit the model with cross-validation.

- Biomarker Selection: Extract the model with the best alpha and identify selected features.

Protocol 3: Validation & Risk Stratification Protocol

This protocol validates the selected biomarker panel and tests its prognostic power.

Materials:

- Selected biomarker coefficients from Protocol 1 or 2.

- An independent validation cohort dataset.

- R or Python environment with survival analysis packages.

Methodology:

- Risk Score Calculation: For each sample in the validation set, calculate a risk score (linear predictor).

- Formula: Risk Score = Σ (Coefficientᵢ * Expression Valueᵢ) for all i selected biomarkers.

- Stratification: Dichotomize the validation cohort into "High-Risk" and "Low-Risk" groups using the median risk score as the cutoff.

- Survival Analysis: Perform Kaplan-Meier analysis and log-rank test to compare survival curves between the two groups.

- Discrimination Assessment: Calculate the Concordance Index (C-index) to evaluate the model's predictive accuracy on the validation set.

Diagrams

Title: LASSO Cox Regression Biomarker Selection Workflow

Title: Package Selection Decision Logic

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Computational Analysis

| Item | Function in Analysis |

|---|---|

| High-Performance Computing (HPC) Cluster or Cloud Instance | Provides the necessary computational power and memory for analyzing high-dimensional omics datasets (p >> n) efficiently. |

| RStudio IDE / Jupyter Notebook | Interactive development environments for writing, testing, and documenting analysis code in R or Python, respectively. |

survival R Package |

Foundational package for creating survival objects, performing Kaplan-Meier analysis, and log-rank tests for validation. |

ggplot2 (R) / matplotlib & seaborn (Python) |

Visualization libraries for generating publication-quality figures, including survival curves, coefficient paths, and cross-validation error plots. |

tidyverse (R) / pandas (Python) |

Data wrangling suites for cleaning, filtering, merging, and transforming clinical and omics data prior to modeling. |

| Independent Validation Cohort Dataset | A rigorously curated dataset from a separate patient cohort, essential for externally validating the prognostic signature and assessing generalizability. |

| Data Normalization Pipeline (e.g., DESeq2 for RNA-seq) | Standardized bioinformatics pipelines to preprocess raw omics data into normalized, analysis-ready expression values. |

Within the broader thesis on employing LASSO Cox regression for high-dimensional prognostic biomarker selection in oncology drug development, the selection of the optimal penalty parameter, lambda (λ), is the critical step that balances model complexity with predictive performance. This protocol details the theoretical rationale and practical methodologies for defining λ via cross-validation (CV), ensuring the selection of a sparse, interpretable, and generalizable model for biomarker signature discovery.

Theoretical Framework: The Role of Lambda in LASSO Cox

The LASSO Cox model optimizes the partial log-likelihood subject to a constraint on the sum of the absolute values of the coefficients. The tuning parameter λ controls the strength of this L1 penalty. As λ increases, more coefficients are shrunk to zero, performing feature selection. The optimal λ minimizes the expected prediction error on independent data.

Key Quantitative Relationships:

- λ = 0: Equivalent to standard Cox regression (overfitting risk in high-dimensional data).

- λ → ∞: All coefficients forced to zero (null model).

- Optimal λ: Maximizes the model's ability to predict survival on new data while ensuring parsimony.

Core Protocol: K-Fold Cross-Validation for Lambda Selection

The following protocol is the standard for determining the optimal λ in LASSO Cox regression for biomarker research.

Materials and Reagent Solutions

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Protocol |

|---|---|

| High-Dimensional Survival Dataset | Matrix of normalized gene expression/protein data (rows = patients, columns = candidate biomarkers) with paired survival/censoring times. Primary research input. |

| Statistical Software (R/packages) | Implementation environment. Essential packages: glmnet (for LASSO Cox), survival. |

| Computational Resource | Server or workstation with adequate RAM (≥16GB recommended for p > 10,000). |

| Pre-Processing Pipeline Output | Cleaned, normalized, and centered data ready for model input. |

Detailed Stepwise Methodology

Step 1: Data Partitioning for K-Fold CV

- Randomly split the patient cohort into K subsets (folds) of approximately equal size. K=10 is recommended for a robust bias-variance trade-off.

- For k = 1 to K: designate fold k as the validation set, and the remaining K-1 folds as the training set.

Step 2: Grid Search over Lambda Sequence

- Define a sequence of 100+ logarithmically spaced λ values (λmax to λmin). λ_max is the smallest λ for which all coefficients are zero.

Step 3: Model Training & Validation

- For each λ in the sequence:

- For each fold k:

- Fit the LASSO Cox model on the training set (K-1 folds) using the penalty λ.

- Apply the fitted model to the validation set (fold k) to calculate the predictive partial likelihood deviance (or concordance index, C-index).

- For each fold k:

- Average the deviance (or C-index) across all K folds for each λ.

Step 4: Optimal Lambda Selection Two standard rules are applied to the cross-validated error curve:

- λ.min: The λ that gives the minimum mean cross-validated error.

- λ.1se: The largest λ within one standard error (1se) of the minimum error. This heuristic chooses a simpler model (fewer biomarkers) whose performance is statistically indistinguishable from the best model, promoting greater generalizability.

Step 5: Final Model Fitting

- Using the chosen optimal λ (typically λ.1se for biomarker selection), fit the final LASSO Cox model on the entire dataset. Non-zero coefficients constitute the selected prognostic biomarker signature.

Data Presentation: Quantitative Outputs

Table 1: Illustrative Cross-Validation Results for a Simulated Dataset (n=500, p=1000)

| Lambda Sequence Index | Log(λ) | Mean Deviance (CV Error) | Deviance SE | No. of Non-Zero Coefficients |

|---|---|---|---|---|

| 100 | -1.24 | 5.891 | 0.452 | 0 |

| 85 | -0.87 | 4.112 | 0.321 | 12 |

| 70 (λ.1se) | -0.52 | 3.745 | 0.285 | 28 |

| 65 (λ.min) | -0.41 | 3.702 | 0.291 | 35 |

| 50 | -0.10 | 4.003 | 0.305 | 67 |

| 30 | 0.35 | 4.887 | 0.398 | 143 |

Note: λ.1se provides a more parsimonious model (28 biomarkers) with nearly identical predictive accuracy to the λ.min model (35 biomarkers).

Mandatory Visualizations

Title: Workflow for Lambda Selection via K-Fold Cross-Validation

Title: Rules for Selecting Optimal Lambda from CV Error Curve

Application Notes on Coefficient Extraction in LASSO-Cox Regression

Within a thesis focused on prognostic biomarker selection using LASSO-Cox regression, Step 4 is the pivotal analytical phase where the statistical model yields interpretable results. After cross-validation has identified the optimal penalization parameter (lambda), the model is refit on the entire training dataset using this lambda. This process shrinks the coefficients of non-informative or redundant features to exactly zero, thereby performing automatic feature selection. The non-zero coefficients that remain constitute the selected prognostic biomarker signature. The magnitude and sign (hazard ratio >1 or <1) of these coefficients provide direct biological and clinical interpretation: a positive coefficient indicates a biomarker associated with increased risk (worse prognosis), while a negative coefficient indicates a protective factor. Extracting and validating this sparse set of coefficients is the core deliverable, bridging high-dimensional omics data to a tractable, biologically testable hypothesis.

Experimental Protocol: Model Fitting and Coefficient Extraction

Objective: To fit a final LASSO-Cox proportional hazards model using the optimal lambda and extract the non-zero coefficients and corresponding feature names.

Materials & Software: R (version ≥4.0) with glmnet and survival packages, or Python with scikit-survival and pandas libraries.

Procedure:

- Data Preparation: Ensure the training dataset (matrix

X_train, survival objectsy_train(time, status)) is standardized (center and scale) as in the cross-validation step. - Final Model Fitting: Using the predetermined optimal lambda (lambda.min or lambda.1se obtained from

cv.glmnet), fit the LASSO-Cox model on the complete training set.- R Code Snippet:

- Coefficient Extraction: Extract the model coefficients at the chosen lambda.

- R Code Snippet:

- Feature Selection: Identify features with non-zero coefficients.

- R Code Snippet:

- Results Compilation: Create a results table for downstream analysis and reporting.

Data Presentation

Table 1: Example Output of Selected Biomarkers from LASSO-Cox Regression

| Gene Symbol | Coefficient (β) | Hazard Ratio (exp(β)) | Biological Interpretation |

|---|---|---|---|

| CDKN2A | 0.724 | 2.062 | Risk factor (Poor prognosis) |

| TP53 | 0.531 | 1.701 | Risk factor (Poor prognosis) |

| BRCA1 | -0.489 | 0.613 | Protective factor (Better prognosis) |

| PTEN | -0.312 | 0.732 | Protective factor (Better prognosis) |

| MYC | 0.210 | 1.234 | Risk factor (Poor prognosis) |

Table 2: Model Fit Statistics

| Optimal Lambda (λ) | Number of Non-Zero Coefficients | Partial Likelihood Deviance |

|---|---|---|

| 0.048 | 5 | 42.7 |

Workflow and Pathway Visualization

Title: Workflow for Extracting Biomarker Signature from LASSO-Cox

Title: Biological Pathway of a Hypothetical LASSO-Selected Signature

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in LASSO-Cox Biomarker Research |

|---|---|

glmnet R Package |

Primary software toolkit for fitting LASSO (alpha=1) and Elastic Net Cox regression models, providing coefficient extraction and cross-validation functions. |

survival R Package |

Essential for creating survival objects (time-to-event data) and performing ancillary survival analyses (Kaplan-Meier, standard Cox models) for validation. |

| High-Performance Computing (HPC) Cluster | Enables computationally efficient cross-validation and model fitting on high-dimensional genomic datasets (e.g., RNA-seq with 20,000+ features). |

| Gene Annotation Database (e.g., biomaRt, ENSEMBL) | Used to map the selected feature identifiers (e.g., Ensembl IDs) to interpretable gene symbols and biological pathways for downstream analysis. |

| Independent Validation Cohort Dataset | A rigorously curated dataset with matched omics and clinical survival data, mandatory for externally validating the prognostic performance of the extracted signature. |

Within the broader thesis on LASSO Cox regression for prognostic biomarker selection, Step 5 represents the translational culmination. Here, the selected biomarkers are integrated into a quantitative model that outputs a prognostic risk score for each patient. This score enables the stratification of a patient cohort into distinct risk groups (e.g., low-, intermediate-, high-risk), which is critical for clinical decision-making, trial design, and personalized therapeutic strategies. This Application Note provides a detailed protocol for generating, validating, and interpreting this risk score.

Core Protocol: Risk Score Calculation and Stratification

Prerequisites

- Input Data: A dataset with normalized expression values (e.g., RNA-seq counts, protein intensity) for the final panel of p biomarkers selected via LASSO Cox regression in Step 4.

- Model Coefficients: The regression coefficients (β) for each of the p biomarkers, as output by the final, refit Cox proportional hazards model on the selected features.

Step-by-Step Protocol

Step 5.1: Risk Score Calculation For each patient i in the cohort, calculate the linear predictor, termed the Prognostic Risk Score (PRS):

PRS_i = (β₁ * Expr_{i1}) + (β₂ * Expr_{i2}) + ... + (β_p * Expr_{ip})

Where:

β₁...β_pare the Cox model coefficients.Expr_{i1}...Expr_{ip}are the expression levels of the p biomarkers for patient i.

Protocol Note: Data should be standardized (z-score) if the model was built on standardized data to ensure correct coefficient application.

Step 5.2: Risk Group Stratification Two primary methods are used:

- Median Split: Patients are dichotomized into "High-Risk" and "Low-Risk" groups based on the median PRS of the training cohort.

- Optimal Cutpoint Analysis: Using the

surv_cutpointfunction (R package survminer), determine the PRS value that maximizes the survival difference between groups via log-rank statistics. This is data-driven but requires validation.

Critical Step: The cutpoint (median or optimal) derived from the training cohort MUST be applied without modification to the validation cohort(s).

Step 5.3: Survival Analysis Validation Perform Kaplan-Meier survival analysis with log-rank test to assess the significance of the difference between stratified groups in both training and independent validation sets.

Step 5.4: Time-Dependent ROC Analysis Evaluate the predictive accuracy of the PRS at key clinical timepoints (e.g., 3, 5 years) by calculating the Area Under the Curve (AUC) for time-dependent Receiver Operating Characteristic (ROC) curves.

Data Presentation & Interpretation

Table 1: Exemplar Risk Stratification Survival Analysis Results

Results from a hypothetical study on non-small cell lung cancer using a 8-gene LASSO-derived signature.

| Cohort (n) | Risk Group | Median OS (Months) | Hazard Ratio (95% CI) | Log-rank P-value |

|---|---|---|---|---|

| Training (250) | Low (n=125) | 82.1 | Reference | < 0.0001 |

| High (n=125) | 31.4 | 3.45 (2.41 - 4.93) | ||

| Validation (150) | Low (n=78) | 75.6 | Reference | 0.0012 |

| High (n=72) | 29.8 | 2.87 (1.81 - 4.54) |

Interpretation: The PRS robustly stratifies patients into groups with significantly different overall survival (OS). A Hazard Ratio (HR) > 1 indicates higher risk of death. Consistency across cohorts validates the signature.

Table 2: Time-Dependent AUC for Prognostic Risk Score

| Prognostic Model | AUC at 3 Years | AUC at 5 Years |

|---|---|---|

| PRS (LASSO Signature) | 0.78 | 0.81 |

| Clinical Stage Only | 0.65 | 0.67 |

| PRS + Clinical Stage | 0.83 | 0.85 |

Interpretation: The PRS has good discriminatory power (AUC > 0.75). The increase in AUC upon adding the PRS to clinical stage demonstrates additive prognostic value.

Experimental Protocol: In Vitro Validation of a High-Risk Gene

This protocol outlines a functional validation experiment for a candidate biomarker identified as a high-risk gene (positive β coefficient) in the LASSO model.

Objective: To validate that knockdown of Gene X (a high-risk biomarker) impairs cellular proliferation and survival in a relevant cancer cell line.

Materials: See "The Scientist's Toolkit" below. Workflow:

- Cell Culture: Maintain appropriate cancer cell lines in recommended media.

- siRNA Transfection: Transfect cells with siRNA targeting Gene X and a non-targeting control (NTC) using a lipid-based transfection reagent.

- Protocol: Seed cells in 96-well plates. At 60% confluency, transfect with 20 nM siRNA following manufacturer's instructions.

- Proliferation Assay (MTT): 72 hours post-transfection, add MTT reagent, incubate, solubilize, and measure absorbance at 570nm.

- Apoptosis Assay (Flow Cytometry): 48 hours post-transfection, stain cells with Annexin V-FITC and Propidium Iodide (PI). Analyze by flow cytometry to quantify early (Annexin V+/PI-) and late (Annexin V+/PI+) apoptotic populations.

- Statistical Analysis: Perform t-tests comparing siRNA-Gene X to NTC groups. P < 0.05 is considered significant.

Visualizations

Workflow for Prognostic Risk Score Generation and Validation

Putative Pro-Survival Role of a High-Risk Gene

The Scientist's Toolkit

| Research Reagent / Solution | Function in Protocol |

|---|---|

| siRNA targeting Gene X | Specifically knocks down mRNA expression of the high-risk biomarker for functional validation. |

| Lipid-based Transfection Reagent | Forms complexes with siRNA to facilitate its delivery into mammalian cells. |

| Non-Targeting Control (NTC) siRNA | Negative control with no known homology to the human genome, controlling for non-specific effects of transfection. |

| MTT Reagent (3-(4,5-dimethylthiazol-2-yl)-2,5-diphenyltetrazolium bromide) | A yellow tetrazole reduced to purple formazan by metabolically active cells, serving as a proxy for cell viability/proliferation. |

| Annexin V-FITC Binding Buffer | Provides the optimal calcium-containing environment for Annexin V to bind to phosphatidylserine exposed on the outer leaflet of apoptotic cell membranes. |

| Propidium Iodide (PI) Staining Solution | A membrane-impermeant DNA intercalating dye that stains necrotic or late apoptotic cells. |

| Flow Cytometry Buffer (e.g., PBS + 2% FBS) | Used to resuspend and wash cells for flow analysis, maintaining viability and reducing non-specific binding. |

Navigating Pitfalls and Fine-Tuning: Solutions for Robust LASSO Cox Models

Theoretical Foundation & Quantification

Overfitting: A model that learns the noise and random fluctuations in the training data to an extent that it negatively impacts performance on new data. In LASSO Cox regression for biomarker selection, this leads to non-reproducible biomarker panels with inflated performance estimates.

Underfitting: A model too simple to capture the underlying relationship between biomarkers and the time-to-event outcome, resulting in poor predictive performance on both training and test data.

Key Quantitative Indicators:

| Metric | Overfitting Indicator | Underfitting Indicator | Optimal Range (Typical) |

|---|---|---|---|

| Training C-index | Very high (>0.9) | Low | ~0.7 - 0.85 |

| Validation C-index | Significantly lower than training (>0.1 difference) | Low and similar to training | Close to training (<0.05 difference) |

| Number of Selected Biomarkers (λ_min) | High (approaching full feature set) | Very low (1-2) | Determined by λ_1se |

| Partial Likelihood Deviance | Very low training deviance | High training & validation deviance | Validation deviance within 1 SE of minimum |

Diagnostic Protocol: Generating Learning Curves for LASSO Cox

Protocol: K-Fold Cross-Validation Learning Curve

Objective: To visualize model performance (C-index or deviance) as a function of training set size, diagnosing bias (underfitting) and variance (overfitting).

Materials & Software:

- R Environment (v4.3.0+) with

glmnet,survival,caretpackages. - Python Alternative:

scikit-survival,scikit-learn,numpy,matplotlib.

Procedure:

- Data Preparation: Start with a pre-processed omics matrix

X(rows=samples, columns=biomarkers) and survival objecty(time, status). Ensure proper normalization. - Define Performance Metric: Use Harrell's Concordance Index (C-index) as the primary metric.

- Create Training Subsets: Generate a sequence of increasing training set sizes (e.g., 10%, 25%, 50%, 75%, 90% of full training set).

- Iterate & Validate: For each training subset size:

- Repeat 10 times to reduce variance:

- Randomly sample the subset from the full training data.

- Fit a LASSO Cox regression via 10-fold cross-validation on this subset to find the optimal λ (λ_1se is recommended).

- Record the C-index on this training subset.

- Record the C-index on a held-out validation set (constant across all iterations).

- Repeat 10 times to reduce variance:

- Plot & Analyze: Plot the mean training and validation scores against training set size. Error bars represent ±1 standard deviation.

Protocol: Regularization Path Analysis with Cross-Validation

Objective: To directly assess the impact of the penalty term (λ) on model complexity and generalization.

Procedure:

- Fit a LASSO Cox model on the entire training set using

cv.glmnet(family="cox") withnfolds=10. - Extract:

- The sequence of λ values tried.

- The mean cross-validated partial likelihood deviance (or C-index) for each λ.

- The number of non-zero coefficients at each λ.

- Plot:

- Deviance vs. log(λ): Identify λmin (min deviance) and λ1se (most regularized model within 1 SE of min deviance).

- Coefficient Paths: Plot coefficient values vs. log(λ) to see feature selection dynamics.

Visualization of Diagnostic Workflows

Diagram Title: LASSO Cox Overfitting Diagnosis Workflow

Diagram Title: Learning Curve Pattern Interpretation

The Scientist's Toolkit: Key Research Reagents & Solutions

| Item / Solution | Function in LASSO Cox Biomarker Research | Example / Specification |

|---|---|---|

| High-Dimensional Omics Data | The raw input for biomarker discovery. LASSO selects from these candidate features. | RNA-seq counts, Proteomics (MS) intensity, Methylation β-values. |

| Survival Data Curation | Defines the outcome variable for Cox regression. Must be meticulously curated. | Overall Survival (OS) or Progression-Free Survival (PFS) with precise time and event (1/0) indicators. |

| Normalization Software | Pre-processes omics data to remove technical variance, crucial before regularization. | DESeq2 (RNA-seq), limma (microarray), Quantile Normalization. |

| Regularized Regression Package | Implements the core LASSO algorithm for Cox proportional hazards. | R: glmnet. Python: scikit-survival's CoxnetSurvivalAnalysis. |

| Cross-Validation Framework | Provides the resampling method to estimate λ and model performance robustly. | cv.glmnet (R), model_selection.KFold (Python). |

| Model Assessment Metrics | Quantifies the discriminative performance of the prognostic signature. | Concordance Index (C-index), Time-dependent AUC, Calibration plots. |

| Independent Validation Cohort | The ultimate test for generalizability, used after model locking. | A clinically similar but distinct patient cohort with matched omics and survival data. |

Application Notes: The Impact of Correlated Predictors in Biomarker Research

Within the thesis framework of developing a robust LASSO-Cox regression pipeline for prognostic biomarker identification in cancer, addressing predictor correlation is paramount. High correlation among gene expression or protein assay measurements leads to coefficient estimate instability, where the LASSO may arbitrarily select one variable from a correlated group. This results in non-reproducible biomarker panels across bootstrap samples or slight perturbations in the training data, severely undermining the clinical translatability of the model.

The instability arises because the LASSO penalty, ‖β‖₁, is not strictly convex. When predictors are highly correlated, many combinations of coefficients can yield similar penalized likelihoods. The algorithm's convergence to one specific solution is often path-dependent and sensitive to noise.

Table 1: Simulation Results Demonstrating LASSO Instability with Correlated Predictors

| Simulation Scenario | Predictor Correlation (Avg. | ρ | ) | Number of True Non-Zero Coefficients | Frequency of Correct Selection (%) | Average Model Size (Features) | Coefficient Variance Across 100 Runs |

|---|---|---|---|---|---|---|---|

| Independent Features | 0.05 | 10 | 98.7 | 10.1 | 0.02 | ||

| Moderately Correlated | 0.65 | 10 | 45.2 | 12.7 | 1.85 | ||

| Highly Correlated Block | 0.92 | 10 | 12.8 | 8.5 | 3.41 |

Experimental Protocols for Diagnosis and Mitigation

Protocol 1: Diagnosing Correlation-Induced Instability Objective: Quantify the selection instability of a LASSO-Cox model derived from omics data.

- Data Preparation: Standardize all predictor variables (e.g., z-score for gene expression) and ensure survival data (time, event) is correctly formatted.

- Bootstrap Resampling: Generate 200 bootstrap samples from the original dataset (n x p matrix).

- LASSO-Cox Execution: For each bootstrap sample, perform k-fold cross-validated LASSO-Cox regression to select the optimal lambda (λ_min). Use the

glmnetpackage (R) or equivalent. - Selection Frequency Calculation: Record the selected variables (non-zero coefficients) at λ_min for each run. Calculate the frequency with which each variable appears across all 200 bootstrap models.

- Analysis: Variables with selection frequency between 20% and 80% are considered unstable. Generate a histogram of selection frequencies to visualize the distribution.

Protocol 2: Implementing the Elastic Net for Stabilized Selection Objective: Apply Elastic Net regularization to mitigate instability while maintaining variable selection.

- Parameter Grid Definition: Define a grid for the alpha (α) parameter, where α=1 is LASSO and α=0 is Ridge. A common heuristic is α ∈ [0.5, 0.7]. Define a lambda (λ) sequence (e.g., 100 values on a log scale).

- Nested Cross-Validation:

- Outer Loop: 5-fold CV for performance estimation.

- Inner Loop: Within each training fold, run 5-fold CV over the (α, λ) grid. Optimize for the partial likelihood deviance or C-index.

- Model Fitting: Fit the final model on the entire dataset using the optimal (α, λ) pair.

- Stability Assessment: Repeat the bootstrap procedure from Protocol 1 using the optimal α. Compare the distribution of selection frequencies to the pure LASSO model.

Visualization: The Pathway from Correlation to Clinical Unreliability

Diagram Title: Causal Chain of Correlation-Induced Model Failure

Diagram Title: Protocol for Stable Biomarker Selection

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Protocol | Example/Notes |

|---|---|---|

R glmnet Package |

Core engine for fitting LASSO and Elastic Net Cox models. | Provides efficient coordinate descent algorithm for regularization path. Critical for Protocols 1 & 2. |

survival R Package |

Handles survival data structures and computes necessary statistics. | Used in conjunction with glmnet for Cox proportional hazards likelihood. |

| High-Performance Computing (HPC) Cluster | Enables intensive bootstrap resampling and nested cross-validation. | Essential for robust stability analysis (200+ iterations). Cloud or local clusters are used. |

| Pre-filtering Algorithm | Reduces dimensionality prior to LASSO. | Methods like variance filtering or univariable Cox p-value threshold (e.g., p<0.2). Minimizes noise. |

| Correlation Matrix Calculator | Diagnoses degree of multicollinearity. | R functions: cor(), caret::findCorrelation(). Visualize with corrplot. |

| Stability Metric | Quantifies selection reproducibility. | Selection Frequency (SF) or Jaccard index of selected sets across bootstrap samples. |

Introduction in Thesis Context Within the broader thesis on LASSO Cox regression for prognostic biomarker discovery, selecting the optimal penalization parameter (λ) is critical. The standard approach uses cross-validation (CV) to find λ that minimizes the partial likelihood deviance (λ_min). However, this often selects a model with marginally better predictive performance but more biomarkers, increasing complexity and overfitting risk. The '1-standard error (SE) rule' proposes a more parsimonious solution: choosing the largest λ whose CV error is within one SE of the minimum. This note details the application of the 1-SE rule for robust, sparse biomarker panel selection.

Data Presentation

Table 1: Comparison of λ Selection Rules in a Simulated Biomarker Study (n=300, p=500)

| Selection Rule | Lambda Value | Number of Selected Biomarkers | Cross-Validated C-index | Model Complexity |

|---|---|---|---|---|

| λ_min (Minimum Criterion) | 0.045 | 28 | 0.75 (± 0.03) | Higher |

| λ_1se (1-SE Rule) | 0.128 | 12 | 0.73 (± 0.03) | Lower |

Table 2: Impact on Model Stability Across 100 Bootstrap Samples

| Selection Rule | Mean Biomarker Count | Selection Frequency of Top 5 Biomarkers (%) | Concordance Index SD (across samples) |

|---|---|---|---|

| λ_min | 31.2 | [78, 65, 60, 45, 42] | 0.041 |

| λ_1se | 14.7 | [95, 90, 88, 80, 78] | 0.027 |

Experimental Protocols

Protocol 1: Implementing 1-SE Rule for LASSO Cox Regression

- Data Preparation: Format your survival data as a matrix

X(standardized biomarkers) and a survival objecty(time, status). - Perform Cross-Validation: Use 10-fold cross-validation (

cv.glmnetfromglmnetpackage in R) with the family set to "cox" andtype.measure="deviance". - Extract Lambda Values: Retrieve

lambda.minandlambda.1sedirectly from thecv.glmnetoutput object. Thelambda.1seis computed as: λ where CV error =min(CV error) + 1*SE(min(CV error)). - Fit Final Model: Refit the LASSO Cox model on the entire training dataset using

lambda.1seas the penalty parameter. - Extract Biomarkers: Identify non-zero coefficient biomarkers from the final model for downstream validation.

Protocol 2: Validation and Stability Assessment

- Bootstrap Resampling: Generate 100 bootstrap samples from the original dataset.

- Repeated CV & Selection: On each sample, repeat Protocol 1, recording biomarkers selected by both λ rules.

- Frequency Analysis: Calculate the percentage of bootstrap iterations in which each biomarker is selected (see Table 2).

- Performance Assessment: Evaluate the model derived from the

lambda.1seon an independent validation cohort using the concordance index (C-index) and Kaplan-Meier analysis of risk groups.

Mandatory Visualization

Title: Lambda Selection Logic for Parsimonious Biomarkers

Title: 1-SE Rule Experimental Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for LASSO Cox & 1-SE Rule Analysis

| Item | Function/Description |

|---|---|

| R Statistical Environment | Open-source platform for statistical computing and graphics. |

glmnet R Package |

Essential for fitting LASSO, ridge, and elastic-net models for Cox and other families. Computes cross-validated λ. |

survival R Package |

Provides functions for survival analysis, including creating survival objects and calculating the Cox model. |

| High-Performance Computing (HPC) Cluster / Cloud | Facilitates bootstrap resampling and large-scale cross-validation analyses computationally efficiently. |

| Bioconductor Annotation Packages | Maps selected biomarker identifiers (e.g., gene symbols) to biological pathways and functions for interpretation. |

| Integrated Development Environment (IDE) | RStudio or VS Code for scripting, debugging, and version control (Git) of the analysis pipeline. |

This document serves as detailed Application Notes and Protocols within a broader research thesis focused on developing a robust pipeline for LASSO Cox regression in prognostic biomarker selection. A core assumption of the standard Cox model is proportional hazards (PH). In biomarker research, this assumption is frequently violated, as the prognostic effect of a biomarker (e.g., a gene expression signature, a circulating protein) may diminish, increase, or even reverse over time. Naively applying LASSO-Cox under non-proportional hazards (NPH) can lead to biased coefficient estimates, incorrect biomarker selection, and ultimately, unreliable prognostic models. This necessitates specific adaptations in both variable selection and model estimation phases.

Table 1: Common NPH Patterns in Biomarker Research

| Pattern | Description | Typical Biomarker Example | Impact on Standard Cox |

|---|---|---|---|

| Early Effect | Strong initial risk difference that attenuates or disappears. | Biomarker of treatment response (e.g., initial tumor shrinkage). | Hazard Ratio (HR) biased towards 1; loss of power. |

| Delayed Effect | Effect emerges only after a certain latency period. | Immuno-oncology biomarkers (e.g., immune-related adverse events signaling later benefit). | Missed significant association. |

| Crossing Hazards | Risk ordering reverses over time (e.g., treatment harms short-term but benefits long-term). | Biomarkers for aggressive vs. indolent disease subtypes. | Estimated HR is meaningless average. |

| Decaying Effect | Effect size decreases monotonically over time. | Biomarker of residual disease post-surgery. | Underestimation of early risk. |

Table 2: Statistical Methods for Handling NPH

| Method | Core Principle | Key Advantage for Biomarker Selection | Key Limitation |

|---|---|---|---|

| Time-Dependent Coefficients | Model β as function of time: β(t) = β * g(t) (e.g., linear, log, piecewise). | Directly models temporal effect change; interpretable. | Requires specification of g(t); can overfit. |

| Stratified Cox Model | Stratifies by biomarker level; allows different baseline hazards per stratum. | No PH assumption within strata; simple. | Cannot estimate biomarker's HR; loses power if many strata. |

| Additive (Aalen) Model | Models cumulative hazard additively with time-varying coefficients. | Fully non-parametric; no PH assumption. | Less standard in biostatistics; software less common. |

| Landmark Analysis | Fits separate Cox models at pre-specified "landmark" times post-baseline. | Intuitive; avoids time-varying bias. | Discards data; choice of landmark is arbitrary. |

| Joint Models | Jointly models longitudinal biomarker trajectory and time-to-event. | Uses full biomarker history; handles measurement error. | Computationally intensive; complex implementation. |

Experimental Protocols for NPH Assessment & Adaptation

Protocol 3.1: Diagnostic Testing for NPH in a Biomarker Cohort

Objective: To formally test the PH assumption for candidate biomarkers prior to selection via LASSO-Cox. Materials: Time-to-event dataset with survival time, event status, and standardized biomarker values (e.g., RNA-Seq counts normalized to TPM). Procedure:

- Fit Univariable Cox Models: For each pre-filtered biomarker, fit a standard Cox model:

h(t|X) = h₀(t) exp(βX). - Apply Schoenfeld Residuals Test:

- Extract Schoenfeld residuals for each biomarker.

- Perform a global test (Grambsch-Therneau test) via

cox.zph()function in R (survivalpackage). A significant p-value (<0.05) indicates violation of the PH assumption for that biomarker. - Visually inspect plots of scaled Schoenfeld residuals vs. time. A non-flat trend suggests time-varying effect.